
If you create video content, you know how much audience engagement matters. Sync Labs offers an AI-powered lipsync tool that lets a character speak in multiple languages, which makes your content accessible to a wider audience. This SyncLabs review looks at its key features, strengths, and limitations for creators.

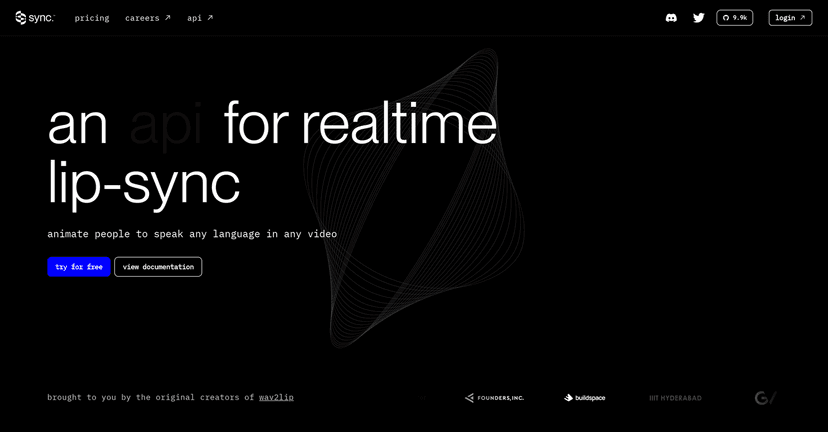

SyncLabs Review

SyncLabs is aimed at video creators who want to localize their content efficiently. Its AI animates characters so that their lip movements are synced to speech in various languages. It can be applied to movies, podcasts, and games, which makes it a useful option for improving viewer engagement. The real-time animation stands out in particular, because it gives you the flexibility to cater to diverse audiences. In practice, your characters can cross language barriers and reach a global audience.

Key Features

- API Integration for seamless workflow incorporation

- High-quality lipsync capabilities across diverse video types

- Real-time animation for instant language syncing

- Compatibility with multiple platforms and media formats

Pros and Cons

Pros

- Versatile tool for a wide range of applications

- Supports various content formats for maximum utility

- API-centric design allows ease of integration into existing systems

Cons

- Learning curve for those not familiar with APIs

- Quality relies heavily on the context of the original video

Pricing Plans

This review does not list exact pricing. For the most accurate and up-to-date plans, see Sync Labs’ [pricing page](https://synclabs.so/pricing).

Wrap up

In summary, Sync Labs is a capable tool for video creators who want to enhance their content with AI-driven lipsync technology. Its feature set and versatility make it a valuable addition to a content creation workflow. Keep in mind the learning curve that comes with using an API and the fact that output quality depends on the original video. If you’re ready to create engaging, multilingual content, Sync Labs could be a good fit for you.