If you’ve ever asked two different AI models the same question and gotten two completely different answers, you already know the annoying part: guessing which one is actually right. That’s why I like tools that let you compare outputs side by side instead of bouncing between tabs and hoping for the best.

OverallGPT is built for exactly that. It lets you view multiple AI model responses in a single place, so you can quickly spot which answer is clearer, more accurate, or just more “on point” for your use case. In my experience, the biggest win isn’t just convenience: you evaluate responses faster because they sit next to each other instead of in separate tabs.

OverallGPT Review

OverallGPT is a platform for comparing multiple AI model outputs in one view. Instead of asking one model, then asking another, then copying and pasting into a doc, you can put them side by side and judge them quickly.

What I noticed right away is how much easier it is to evaluate answers when they’re presented together. You can scan for things like:

- Answer quality (does it actually answer the question, or does it ramble?)

- Clarity (is it formatted well, with readable structure?)

- Specificity (does it give concrete examples, or stay vague?)

- Consistency (does it contradict itself, or stay coherent?)

And honestly, that’s the whole point. If you’re using AI for anything practical—writing, research, planning, troubleshooting—you want the best response, not the first response you got.

If you want to try it, you can start here: OverallGPT.

Key Features

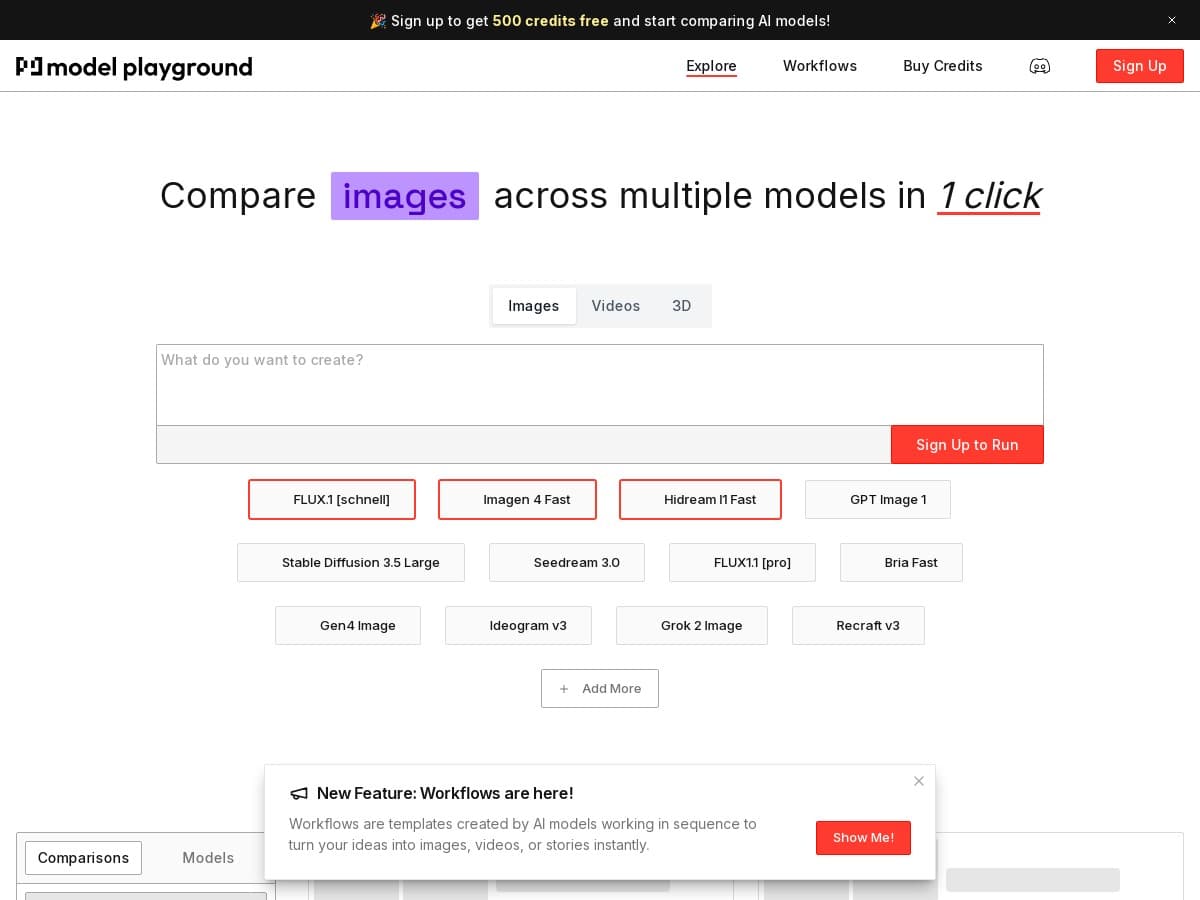

- Side-by-side comparison of multiple AI model outputs

This is the core feature. You can view responses next to each other, which makes it much faster to spot differences in tone, reasoning, and completeness.

- Quick evaluation across scenarios

I found this works especially well for “same prompt, different models” testing. For example, if you ask for a marketing email, one model might produce a punchier version while another gives a more structured template. Seeing both at once helps you pick what you want to reuse.

- Designed for speed

The interface is geared toward quick checks. When I’m testing prompts, I don’t want to waste time switching contexts. This style of comparison is built for that.

- Better understanding of model behavior

Even without getting overly technical, it’s easier to learn what each model tends to do. One might be more concise. Another might be more verbose. That pattern recognition is genuinely useful if you’re iterating on prompts.

Pros and Cons

Pros

- Clear transparency—you can actually compare responses directly, instead of guessing which output is “better.”

- Faster decision-making—when you’re doing prompt testing or content drafting, reducing back-and-forth saves time.

- More practical than single-model workflows—if you rely on AI for real work, comparing outputs is usually smarter than trusting one answer blindly.

- Helps you learn—you start noticing patterns in how different models respond, which makes your future prompting better.

Cons

- Model coverage may be limited—not every AI model available in the wild will necessarily be included. If you’re looking for a very specific model, you’ll want to verify it’s supported.

- You still have to judge the output—the tool can show you comparisons, but it can’t automatically tell you what’s factually correct. You’ll still need to read carefully, especially for anything high-stakes.

- Interpretation takes effort—if you’re not sure what “good” looks like, you might need a bit of practice comparing responses (what to look for, what to ignore).
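If you’re not sure what “good” looks like, one option is to score each response by hand against a fixed rubric, such as the four criteria listed earlier in this review (answer quality, clarity, specificity, consistency). The sketch below is only a personal scoring aid and is not part of OverallGPT. The model labels, the scores, and the weights are all hypothetical placeholders you would replace with your own.

```python
from dataclasses import dataclass

# Criteria come from the review; the weights are an illustrative assumption.
WEIGHTS = {
    "answer_quality": 0.4,
    "clarity": 0.2,
    "specificity": 0.2,
    "consistency": 0.2,
}


@dataclass
class ScoredResponse:
    model: str    # hypothetical label, e.g. "model_a"
    scores: dict  # criterion name -> score from 1 (poor) to 5 (excellent)

    def weighted_total(self) -> float:
        # Refuse to rank a response that is missing a criterion.
        missing = set(WEIGHTS) - set(self.scores)
        if missing:
            raise ValueError(f"missing scores for: {sorted(missing)}")
        return sum(WEIGHTS[c] * self.scores[c] for c in WEIGHTS)


def rank(responses):
    """Return responses sorted from highest to lowest weighted total."""
    return sorted(responses, key=lambda r: r.weighted_total(), reverse=True)


# Hypothetical scores assigned by hand after reading both outputs.
responses = [
    ScoredResponse("model_a", {"answer_quality": 4, "clarity": 5,
                               "specificity": 3, "consistency": 4}),
    ScoredResponse("model_b", {"answer_quality": 5, "clarity": 3,
                               "specificity": 4, "consistency": 4}),
]

for r in rank(responses):
    print(f"{r.model}: {r.weighted_total():.2f}")
```

The weights favor answer quality here, but that is a choice, not a rule. For high-stakes questions you would still need to fact-check the top-ranked answer, since a rubric like this measures your reading of the response, not its correctness.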

Pricing Plans

Specific pricing details weren’t available for this review. For the most accurate and up-to-date pricing (and to check whether there are free trials, limits, or tier differences), it’s best to visit the official site directly.

If you’re comparing tools, I’d also pay attention to things like:

- How many comparisons you can run per day/month

- Whether you can select specific models or if it’s limited by plan

- Any restrictions on output length

- Whether pricing changes based on usage (tokens, requests, or “credits”)

Wrap up

OverallGPT feels like a practical tool for anyone who uses AI regularly and doesn’t want to rely on a single model’s answer. The side-by-side comparison approach is exactly what I want when I’m trying to choose the best response quickly—especially for writing, planning, or general research.

It’s not magic, though. You still need to evaluate the outputs yourself, and you may run into limitations depending on which models are available. Still, if your workflow involves comparing AI responses, OverallGPT is worth checking out.